Today, Artificial Intelligence (AI) is deeply embedded in corporate processes. Companies use neural networks for marketing automation, candidate scoring, contract generation, and customer support. However, the "Wild West" era of AI in Europe has officially come to an end.

The AI Act, adopted by the European Union, has entered its active enforcement phase. It is the world's first comprehensive set of rules that transforms AI from a zone of technological creativity into a zone of strict legal oversight.

In this article, we break down how the law affects businesses, which AI systems are targeted, and how to avoid colossal fines.

There is a dangerous misconception that the AI Act only regulates IT giants like OpenAI or Google. This is not the case. The law applies to any company that places AI systems on the EU market or deploys them there, and it also applies when the output of an AI system is used within the EU.

Even if you don't write code but simply purchase ready-made software, you are a "deployer" (user) and bear responsibility. Risks arise if your business uses AI for:

Screening resumes, evaluating candidates, and HR analytics;

Automated customer scoring and creditworthiness assessment;

Analysis of legal documents and contract generation (Legal Tech);

Processing customer inquiries via smart chatbots.

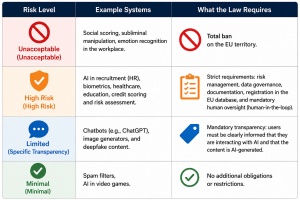

The AI Act takes a risk-based approach: the greater the threat an AI application poses to human rights, the stricter the requirements.

A serious vulnerability for businesses today is "Shadow AI"—when employees upload confidential data into public neural networks without management's knowledge.

By pasting commercial contract texts, personal client data, or financial reports into a prompt for summarization, employees expose the company to violations of not only the AI Act but also the strict GDPR rules. A data leak through an AI model can therefore lead to fines from European regulators under both regimes.

Important: The use of AI requires an immediate review of the corporate Privacy Policy and the implementation of internal AI Governance.
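One way an internal AI policy can be backed by tooling is a simple check that runs before a prompt leaves the company. The sketch below is only an illustration: the pattern set, the category names, and the `find_sensitive` function are assumptions made for this example, and a few regular expressions will not catch every kind of confidential data.

```python
import re

# Illustrative patterns only (hypothetical policy); real detection needs to be much broader.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "iban": re.compile(r"\b[A-Z]{2}\d{2}(?: ?[A-Z0-9]{4}){3,7}(?: ?[A-Z0-9]{1,3})?\b"),
    "confidential_marker": re.compile(r"\b(?:confidential|trade secret)\b", re.IGNORECASE),
}


def find_sensitive(prompt: str) -> dict[str, list[str]]:
    """Return the matches found per category; an empty dict means nothing was flagged."""
    hits: dict[str, list[str]] = {}
    for name, pattern in PATTERNS.items():
        found = [match.group(0) for match in pattern.finditer(prompt)]
        if found:
            hits[name] = found
    return hits


prompt = "Summarize the contract for anna@example.com, IBAN DE89 3704 0044 0532 0130 00."
flagged = find_sensitive(prompt)
if flagged:
    # In this sketch a flagged prompt is simply reported instead of being sent.
    print("Blocked, prompt contains:", sorted(flagged))
```

A check like this works best as a safety net alongside the written policy and staff training, not as a replacement for them.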

The market for AI assistants for lawyers is growing, but advertising such services now requires caution. AI does not have a law license and is prone to "hallucinations" (fabricating facts).

If your platform helps automate legal processes, avoid trigger phrases in marketing:

❌ "AI completely replaces the lawyer"

❌ "Guaranteed legal integrity from our AI"

❌ "Final legal opinion in 1 minute"

Instead, position the product as a tool to increase human efficiency, leaving the final decision to a qualified specialist.

Sanctions for non-compliance with the new EU regulation are tiered and can be fatal for a business. In each tier, the higher of the two amounts applies (for SMEs and start-ups, the lower):

Up to €35 million or 7% of global annual turnover for using prohibited AI systems.

Up to €15 million or 3% of global annual turnover for violating requirements for high-risk AI systems.

Up to €7.5 million or 1% of global annual turnover for providing incorrect, incomplete, or misleading information to regulators.
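To see what these caps mean for a specific company, the arithmetic can be sketched in a few lines. The example assumes that the higher of the two figures is the ceiling for most companies and the lower one for SMEs; the tier keys and the `max_fine_eur` function are hypothetical names used only for this illustration, and the result is an upper bound, not a prediction of the fine a regulator would actually impose.

```python
from decimal import Decimal

# Caps as listed above: (fixed amount in EUR, share of global annual turnover).
# The tier keys are hypothetical labels chosen for this sketch.
FINE_TIERS = {
    "prohibited_practice": (Decimal("35000000"), Decimal("0.07")),
    "high_risk_violation": (Decimal("15000000"), Decimal("0.03")),
    "misleading_information": (Decimal("7500000"), Decimal("0.01")),
}


def max_fine_eur(tier: str, global_turnover_eur: Decimal, is_sme: bool = False) -> Decimal:
    """Return the maximum possible fine for a tier.

    Assumption: the higher of the two figures applies, except for SMEs,
    where the lower one applies.
    """
    fixed_cap, share = FINE_TIERS[tier]
    turnover_cap = share * Decimal(global_turnover_eur)
    return min(fixed_cap, turnover_cap) if is_sme else max(fixed_cap, turnover_cap)


# With EUR 2 billion in turnover, 7% is EUR 140 million, which exceeds the EUR 35 million figure.
print(max_fine_eur("prohibited_practice", Decimal("2000000000")))
```

For a large company the percentage figure dominates quickly, which is why turnover rather than the fixed amount usually determines the real exposure.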

To minimize legal risks, companies should work through five steps in order:

Conduct an AI Audit (AI Mapping): Inventory all AI tools used in the company and determine their risk class.

Develop an AI Policy: Implement internal rules for employees—clearly state what data can be uploaded to AI and what is strictly prohibited.

Ensure Human Control: Review automated decision-making processes (especially in HR and finance). Ensure the final word remains with a human.

Update Contracts (Data Processing Agreements): If you use third-party AI services, check that their contracts, including data processing agreements, meet AI Act and GDPR requirements.

Train the Team: Conduct training on digital hygiene and safe work practices with generative AI.
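The first steps above can start as a simple structured inventory. The sketch below is one possible shape for it, not a prescribed format: the `AITool` fields, the `open_issues` checks, and the example entries are all assumptions made for illustration, and the risk class of each real tool has to come from a legal review rather than from code.

```python
from dataclasses import dataclass
from enum import Enum


class RiskClass(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


@dataclass
class AITool:
    name: str
    purpose: str
    risk_class: RiskClass   # assigned during the audit (step 1)
    human_review: bool      # does a person make the final decision? (step 3)
    dpa_checked: bool       # has the vendor contract been reviewed? (step 4)
    third_party: bool = True


def open_issues(tool: AITool) -> list[str]:
    """List the follow-up actions this sketch would flag for one tool."""
    issues = []
    if tool.risk_class is RiskClass.PROHIBITED:
        issues.append("stop using: prohibited practice")
    if tool.risk_class is RiskClass.HIGH and not tool.human_review:
        issues.append("add human review of automated decisions")
    if tool.third_party and not tool.dpa_checked:
        issues.append("review vendor contract / DPA")
    return issues


# Example entries; the tools and their risk classes are illustrative only.
inventory = [
    AITool("resume-screener", "candidate ranking", RiskClass.HIGH,
           human_review=False, dpa_checked=True),
    AITool("support-chatbot", "customer inquiries", RiskClass.LIMITED,
           human_review=True, dpa_checked=False),
]

for tool in inventory:
    print(tool.name, open_issues(tool))
```

Even a spreadsheet with the same columns serves the purpose; what matters is that every tool has an owner, a risk class, and a list of open gaps.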